The IS Student Research Symposium provides a forum for students across all programs of the Department of Information Systems to showcase their ongoing or completed research, exchange research ideas, and sharpen their presentation skills while competing for awards. The competition accepts submissions on a broad range of topics relevant to the field of information systems. Student research is central to our programs: it prepares students for future careers and helps build problem-solving skills.

Our 2023 IS Student Research Symposium was open to students in all programs managed by the Department of Information Systems (undergraduate students in the BTA/IS program, MS or PhD graduate students in the IS/HCC program, the IS online program, the Health IT and Software Engineering MPS program, and postdoctoral researchers). We received 32 poster submissions for the 2023 symposium, and around 60 people (including faculty, students, collaborators, and advisory board members) attended the event. Below are the participants and award winners from our 2023 IS Student Research Symposium, which included both virtual and face-to-face events.

Congratulations to the contest winners!

Photos from the event are at the bottom of this webpage.

PhD Student Research Awards

Zahid Hasan

Title: Generalized discriminative model learning from both known and unknown categories

Abstract: Novel Categories Discovery (NCD) facilitates learning from a partially annotated label space and enables deep learning (DL) models to operate in an open-world setting by identifying and differentiating instances of novel classes based on the labeled data notions. One of the primary assumptions of NCD is that the novel label space is perfectly disjoint and can be equipartitioned, but it is rarely realized by most NCD approaches in practice. To better align with this assumption, we propose a novel single-stage joint optimization-based NCD method, Negative learning, Entropy, and Variance regularization NCD (NEV-NCD). We demonstrate the efficacy of NEV-NCD in previously unexplored NCD applications of video action recognition (VAR) with the public UCF101 dataset and a curated in-house partial action-space annotated multi-view video dataset. Further, we perform a thorough ablation study by varying the composition of the final joint loss and associated hyper-parameters. During our experiments with UCF101 and the multi-view action dataset, NEV-NCD achieves ≈ 83% classification accuracy in test instances of labeled data. NEV-NCD achieves ≈ 70% clustering accuracy over unlabeled data, outperforming both naive baselines and state-of-the-art pseudo-labeling-based approaches by ≈ 40% and ≈ 3.5%, respectively, over both datasets.

Seraj Mostafa

Title: CNN based Ocean Eddy detection using Cloud Services

Abstract: This study aims to detect ocean eddies using a Convolutional Neural Network (CNN) on a cloud platform. In particular, the objective is to identify small-scale (<20 km) ocean eddies from satellite remote images, and to assess the feasibility of streamlining the workflow using SageMaker and Elastic Compute Cloud (EC2), the cloud-based tools offered by the AWS (Amazon Web Services) platform. The purpose is to make the services available as an application in the climate change domain using cloud services. Machine Learning as a Service (MLaaS) is a common cloud-based application that requires data preprocessing, labeling, and manual model deployment in advance. Additionally, cloud platforms lack auto-scalability and storage availability for incremental data. SageMaker is a one-stop solution for deploying end-to-end MLaaS solutions that mitigates these challenges. EC2 also offers configurable compute-optimized virtual workstations with industry-level deployment of services. In this report, we highlight CNN-based ocean eddy detection using cloud services, where the proposed approach achieved 95.71% training accuracy and 94.16% validation accuracy. We also compare SageMaker and EC2 in terms of usability, performance, cost, and climate change research. Our analysis shows that SageMaker and EC2 are highly capable of building CNN-based services. Additionally, we point out the potential of these services, their limitations, and challenges related to timeline, cost, resource availability, and the ease of use for deployment of models.

PhD/Postdoc Completed Research Track

1st Place: Kirk Crawford

Title: Complex Dynamics: Disability, Assistive Technology, and the LGBTQIA+ Community Center Experience

Abstract: In this study, we explore the experiences of LGBTQIA+ individuals with disabilities in community centers, with a focus on the role of assistive technology (AT). Our research addresses three key questions: (1) How do LGBTQIA+ individuals with disabilities navigate intersecting identities in community centers? (2) How do social connections impact AT use in these environments? (3) How do community center norms and structures influence AT use? Through 11 semi-structured interviews, we examine the challenges and barriers faced by people with disabilities, their motivations for visiting centers, the impact of social dynamics, structures, and norms on AT use, and the importance of social connections. To address these challenges and foster lasting change, we offer recommendations for designing more inclusive and affordable AT, nurturing interdependence, and promoting collaboration between LGBTQIA+ community centers and disability organizations.

2nd Place: Omar Faruque

Title: Deep Spatiotemporal Clustering: A Temporal Clustering Approach for Multi-dimensional Climate Data

Abstract: Clustering high-dimensional spatiotemporal data using an unsupervised approach is a challenging problem for many data-driven applications. Existing state-of-the-art methods for unsupervised clustering use different similarity and distance functions but focus on either spatial or temporal features of the data. Concentrating on joint deep representation learning of spatial and temporal features, we propose Deep Spatiotemporal Clustering (DSC), a novel algorithm for the temporal clustering of high-dimensional spatiotemporal data using an unsupervised deep learning method. Inspired by the U-net architecture, DSC utilizes an autoencoder integrating CNN-RNN layers to learn latent representations of the spatiotemporal data. DSC also includes a unique layer for cluster assignment on latent representations that uses the Student’s t-distribution. By optimizing the clustering loss and data reconstruction loss simultaneously, the algorithm gradually improves clustering assignments and the nonlinear mapping between low-dimensional latent feature space and high-dimensional original data space. A multivariate spatiotemporal climate dataset is used to evaluate the efficacy of the proposed method. Our extensive experiments show our approach outperforms both conventional and deep learning-based unsupervised clustering algorithms. Additionally, we compared the proposed model with its various variants (CNN encoder, CNN autoencoder, CNN-RNN encoder, CNN-RNN autoencoder, etc.) to get insight into using both the CNN and RNN layers in the autoencoder, and our proposed technique outperforms these variants in terms of clustering results.
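As a rough illustration of the cluster-assignment idea mentioned in the abstract above, the following minimal sketch computes soft assignments from a Student's t kernel. It assumes the kernel form commonly used in deep clustering work; the abstract does not give the formula, so the function name `soft_assign`, the `alpha` parameter, and the toy centroids are all hypothetical and not taken from the DSC implementation.

```python
def soft_assign(z, centroids, alpha=1.0):
    """Soft-assign one latent vector z to each centroid.

    Assumed kernel (not confirmed by the abstract):
    q_j is proportional to (1 + ||z - mu_j||^2 / alpha) ** (-(alpha + 1) / 2)
    """
    weights = []
    for mu in centroids:
        # Squared Euclidean distance between the latent point and the centroid.
        dist_sq = sum((a - b) ** 2 for a, b in zip(z, mu))
        weights.append((1.0 + dist_sq / alpha) ** (-(alpha + 1.0) / 2.0))
    total = sum(weights)
    # Normalize so the assignments form a probability distribution.
    return [w / total for w in weights]


# Hypothetical 2-D latent space with two cluster centroids.
centroids = [(0.0, 0.0), (3.0, 4.0)]
q = soft_assign((0.0, 0.0), centroids)
print(q)
```

A point sitting exactly on the first centroid still receives a small, nonzero weight for the second one; this heavy-tailed behavior is the usual reason given for preferring a Student's t kernel over a Gaussian in this setting.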

PhD/Postdoc Work-in-progress Research Track

1st Place: Uzma Hasan

Title: Can Edge Constraints Guide Causal Structure Learning?

Abstract: Causal discovery enables the estimation of causal relationships from observational data. However, learning causal relationships solely from observational data provides insufficient information about the underlying causal mechanism and the search space of possible graphs. As a result, often the search space can grow exponentially large particularly for approaches that use a greedy score-based technique to search the space of equivalence classes of graphs. Prior causal information such as the presence or absence of a causal edge can be leveraged to guide the discovery process towards a more restricted and accurate search space. Thus, in this study, we present a knowledge-guided score-based causal discovery approach that uses observational data and structural priors (causal edges) as constraints to learn the causal graph. Our approach provides a novel way of applying the available constraints, and can leverage any of the following prior edge information between any two variables: the presence of a directed edge, the absence of an edge, and the presence of an undirected edge. We extensively evaluate the proposed approach across multiple settings in both synthetic and benchmark real-world datasets. Our experimental results demonstrate that structural priors of any type and amount are helpful and guide the search process towards an improved performance and early convergence.
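The three kinds of prior edge information listed in the abstract above can be pictured as a filter on candidate graphs. The sketch below is only an assumption-laden illustration: it treats an "undirected edge" prior as satisfied when the two variables are adjacent in either direction, and the function name, argument names, and toy variables are hypothetical. It does not reproduce the authors' score-based search.

```python
def satisfies_priors(edges, required_directed, forbidden, required_undirected):
    """Check a candidate directed graph against three kinds of edge priors.

    edges: iterable of (cause, effect) pairs in the candidate graph.
    required_directed: pairs that must appear with exactly this orientation.
    forbidden: pairs that must not be adjacent in either direction.
    required_undirected: pairs that must be adjacent, orientation unknown.
    """
    edge_set = set(edges)

    def adjacent(a, b):
        return (a, b) in edge_set or (b, a) in edge_set

    if any((a, b) not in edge_set for a, b in required_directed):
        return False
    if any(adjacent(a, b) for a, b in forbidden):
        return False
    if any(not adjacent(a, b) for a, b in required_undirected):
        return False
    return True


# Hypothetical toy example with three variables.
priors = dict(
    required_directed=[("smoking", "tar")],
    forbidden=[("smoking", "cancer")],
    required_undirected=[("cancer", "tar")],
)
good = [("smoking", "tar"), ("tar", "cancer")]
bad = good + [("smoking", "cancer")]
print(satisfies_priors(good, **priors), satisfies_priors(bad, **priors))
```

A search procedure could call such a check before scoring a candidate, so that graphs violating the priors are never evaluated; whether the proposed approach applies its constraints this way is not stated in the abstract.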

2nd Place: Xiangxiang Kong

Title: Towards Continuous and Efficient Detection of Paroxysmal Sympathetic Hyperactivity (PSH) Episodes among Traumatic Brain Injury Patients: Exploring Human-in-the-loop Anomaly Detection Approach

Abstract: Paroxysmal Sympathetic Hyperactivity (PSH) is a state of excessive autonomic overactivity that occurs in about a third of Traumatic Brain Injury (TBI) patients in neurocritical care and is associated with worse outcomes. It has become increasingly recognized as a potential source of secondary injury in critically ill TBI patients. Therefore, it is of clinical interest to monitor PSH symptoms for early detection and proactive treatment. Though researchers have explored the Paroxysmal Sympathetic Hyperactivity Assessment Measure (PSH-AM) as a quantitative measure for PSH diagnosis and symptom severity characterization, the calculation of PSH-AM is largely based on manual extraction of daily vital signs, which is not only inefficient but may also miss the opportunity to capture the intra-day dynamic patterns of PSH episodes. The ultimate goal of this project is to develop efficient approaches to automatically detect and characterize PSH episodes. To this end, we formulate it as a human-in-the-loop anomaly detection problem where machine and human iteratively define and refine a set of physiological patterns for PSH episodes. This approach is motivated by the nature of PSH episodes: vaguely defined with heterogeneous manifestation, and with expensive annotation cost if completely relying on humans. In this study, we describe the initial step of the human-in-the-loop anomaly detection approach, where we derive a few labeling functions or rules from only a few annotated episodes, which are then used to automatically detect PSH episodes from about 3000 patient days of multivariate high-resolution vital sign time series collected from neurocritical care patients. We demonstrate the utility of this approach by contrasting the patient-level PSH episode statistics between case and control patients.

MS Student Research Award

Md Fourkanul Islam

Title: MyPath: Identifying Surface Characteristics for Generating Personalized Accessible Routes for Wheelchair Users through Crowdsensing

Abstract: Individuals who have mobility impairments face various obstacles while moving around in familiar and unfamiliar places. These barriers can significantly hinder their ability to participate in their community, affect their quality of life, and restrict their independence. For instance, an inaccessible route can quickly become a major obstacle, preventing them from completing their planned trip or deterring future outings. Such unforeseen physical barriers may result in delayed arrival at their destination, exhaustion, or immense frustration. To make travel easier for individuals with mobility challenges, especially those who use wheelchairs, factors such as the presence of sidewalks and curb ramps, and the type of surface must be considered. Several approaches have been proposed to develop accessible routing solutions for wheelchair users. These solutions utilize data gathered from various smartphone sensors or crowd-sourcing to identify the location of barriers and facilities in the built environment. However, none of the existing solutions consider surface-vibration-based accessible path identification. We propose a new system called MyPath, which leverages machine learning techniques to learn the vibration patterns induced by different surfaces across the built environment. These patterns are then classified to provide an end-to-end accessible routing solution. We are also working on creating a three-dimensional computational model of accessibility that includes the user, wheelchair, and environment. This model will enable us to better understand how accessibility changes across different dimensions and provide personalized routing and navigation for MyPath users.

Undergraduate Student Research Award

Mikayla Hopper

Title: The Evolution of Quantum-Safe VPNs

Abstract: Quantum computing is a revolutionary technology that uses quantum mechanics to perform complex computations well beyond the scope of traditional computing. While this is an amazing new wave of technology, quantum computing also poses an unprecedented threat to cybersecurity. Attacks with quantum computing are a huge threat to VPNs because they can break existing public-key algorithms, such as Diffie-Hellman, in polynomial time. Post-Quantum Cryptography (PQC) is the preparation and set of cryptographic standards that need to be developed to ensure existing encryption techniques are changed to withstand the threat of quantum attacks.

It is important that VPNs remain secure to continue to protect user data. WireGuard is a popular VPN among Linux users, and many commercial VPNs support its protocol (including Surfshark, NordVPN, and Mozilla VPN). WireGuard is not quantum-safe by default; it employs Curve25519 (an elliptic curve used for ECDH key exchange) with ChaCha20 for symmetric encryption. In order to become post-quantum secure, WireGuard, and many VPNs that mirror its structure, will need to adopt a completely new handshake protocol, such as one based on Kyber, to maintain the same level of security.

In this ongoing research, we are interested in 1) identifying software vulnerabilities to quantum attacks in VPNs, and 2) evolving existing VPN encryption to hybrid PQC to achieve both quantum and classical protection. Our proposed handshake model implements the Kyber method before the ECDH handshake takes place. This extra handshake layer will hinder a sophisticated quantum attack with its frequently updated protection parameters. This pilot study will be extended by adopting automated methods and tools to detect vulnerabilities to quantum attacks.
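To make the idea of hybrid protection concrete, here is a minimal sketch of one common way to combine two shared secrets into a single session key. It is not the handshake model proposed in this work: the random byte strings merely stand in for the outputs of a Kyber exchange and an ECDH exchange, and the function name, the `context` label, and the HMAC-SHA256 combiner are illustrative assumptions.

```python
import hashlib
import hmac
import os


def combine_secrets(pq_secret: bytes, classical_secret: bytes,
                    context: bytes = b"hybrid-handshake-sketch") -> bytes:
    """Derive one session key from two independently established secrets.

    Both inputs feed a single keyed hash, so an attacker would need to
    recover both secrets to reconstruct the session key.
    """
    return hmac.new(context, pq_secret + classical_secret, hashlib.sha256).digest()


# Placeholders only: a real handshake would obtain these from a Kyber
# encapsulation and a Curve25519 ECDH exchange, respectively.
pq_secret = os.urandom(32)
classical_secret = os.urandom(32)

session_key = combine_secrets(pq_secret, classical_secret)
print(session_key.hex())
```

The design intent of a combiner like this is that the derived key stays secret as long as at least one of the two underlying exchanges remains unbroken.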

Honorable Mentions

Louis Lapp (Baltimore Polytechnic Institute)

Title: Integrating Fourier Transform and Residual Learning for Arctic Sea Ice Forecasting

Abstract: Arctic sea ice plays integral roles in both polar and global environmental systems, notably ecosystems, communities, and economies. As sea ice diminishes due to climate change, it has become imperative to accurately predict sea ice extent (SIE). Using the original dataset of Arctic oceanic and atmospheric variables spanning 1979 to 2021, we propose a sequential pipeline for predicting Arctic sea ice extent one month in advance. After a conditional detrender removed long-term linear trends from all variables, grid search tuned a novel composite Fourier Transform model that iteratively removed oscillation. Using grid search to optimize lags and hyperparameters, Gradient Boosting regressors were trained on baseline, detrended, and de-oscillated data, each achieving successively superior performance with RMSE, NRMSE, and R² metrics. By outperforming current state-of-the-art, machine learning, and deep learning models, this study demonstrates the potential for employing Fourier transform-based pipelines in predicting Arctic SIE. Moreover, the extensive flexibility of this methodology has bolstered the performance of existing models, suggesting its broad applicability in time series prediction. Furthermore, this study may contribute to prediction tasks of related research and advise future adaptation, resilience, and mitigation efforts in response to Arctic sea ice decline.

Xander Dickens (Baltimore Polytechnic Institute)

Title: Comparative Analysis of Machine Learning Models for Predicting Arctic Sea Ice Extent

Abstract: Arctic sea ice extent (SIE) affects weather patterns throughout the world and is linked to climate change. Research has been done in order to create models that predict Arctic sea ice over time in order to hypothesize about the greater impacts of SIE changes. The challenges of predicting SIE have prompted researchers to develop machine learning models of varying complexity and scale. This research seeks to determine if ensemble models are better at predicting sea ice than simpler models, and also if only inputting variables that are causally linked to sea ice will increase the performance of a Long Short-Term Memory (LSTM) model. I compared three simple models to an Extreme Gradient Boosting (XGBoost) ensemble model and found that the simple models had an average RMSE of 8% while XGBoost had an average RMSE of 5%. Then, I used the PCMCI+ algorithm to determine which variables caused sea ice extent, and used this to create two deep-learning LSTM models, one with every input variable and one with only causal variables. This showed that including fewer, more important input variables increased model performance. This research indicates that complicated machine learning models are superior at predicting sea ice, and shows that using only causal variables can help improve model efficiency. Results can inform future researchers about the optimal types of models for SIE prediction, and contribute to current understanding of SIE’s global impacts.

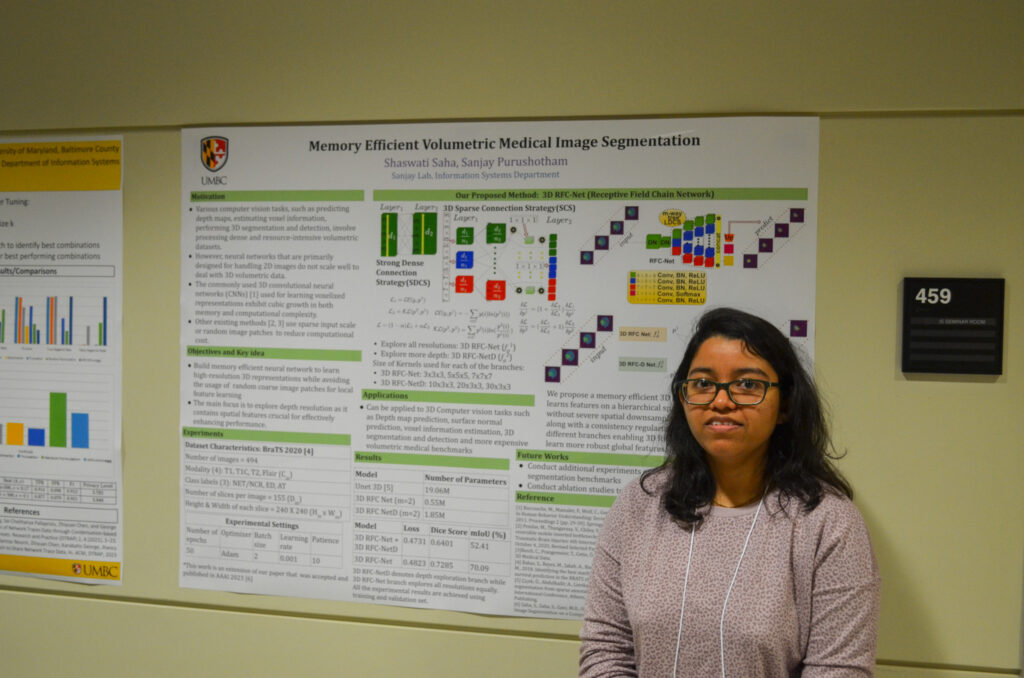

Selected photos from the 2023 Symposium

Previous Events

2022 IS Student Research Symposium